
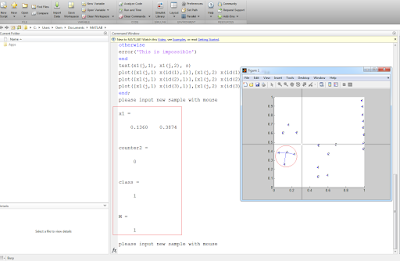

For this tutorial we will implement the K Means algorithm to cluster handwritten digits. Like the last tutorial, we will simply import the digits data set from sklearn to save us a bit of time.

Importing Modules

Before we can begin we must import the modules used below: numpy, plus load_digits, scale, KMeans and the metrics functions from sklearn.

We are going to load the data set from the sklearn module and use the scale function to scale our data down. We want to convert the large values that are contained as features into comparably sized values centered on zero (scale standardizes each feature to zero mean and unit variance) to simplify calculations and make training easier and more accurate.

    digits = load_digits()
    data = scale(digits. …

We also define the number of clusters by creating a variable k, and we define how many samples and features we have by getting the data set shape.

To score our model we are going to use a function from the sklearn website, bench_k_means(estimator, name, data). It fits the estimator and computes many different scores for different parts of our model: inertia, homogeneity, completeness, V-measure, ARI, AMI and the silhouette score (with metric='euclidean'). If you'd like to learn more about what these values mean, the sklearn documentation describes each metric.

Training the Model

Finally, to train the model we will create a K Means model and pass it to the function we created above, which fits and scores it.

    clf = KMeans(n_clusters=k, init="random", n_init=10)
    bench_k_means(clf, "1", data)

MatplotLib Visualization Example

To see a visual representation of how K Means works you can copy and run this code on your computer.

Full Code

The full script starts with import numpy as np, calls seed(42), loads and scales the digits as above, and sets sample_size = 300. It then prints a header row and benchmarks two initializations:

    print("n_digits: %d, \t n_samples %d, \t n_features %d" % (n_digits, n_samples, n_features))
    print(82 * '_')
    print('init\t\ttime\tinertia\thomo\tcompl\tv-meas\tARI\tAMI\tsilhouette')
    bench_k_means(KMeans(init='k-means++', n_clusters=n_digits, n_init=10), name="k-means++", data=data)
    bench_k_means(KMeans(init='random', n_clusters=n_digits, n_init=10), name="random", data=data)
    print(82 * '_')

In this version bench_k_means records a start time with t0 = time() before fitting, and the silhouette score is computed with metric='euclidean' and sample_size=sample_size.

To visualize the results on PCA-reduced data, the script reduces the data to two components with PCA(n_components=2) and fits KMeans(init='k-means++', n_clusters=n_digits, n_init=10) on reduced_data. It builds a mesh over the range of the reduced data with step size h (decrease it to increase the quality of the VQ), obtains a label for each point in the mesh, and assigns a color to each. The result is put into a color plot with imshow (interpolation='nearest', the Paired colormap, aspect='auto', origin='lower'). The data points are drawn on top with plot (style 'k.', markersize=2), and the centroids are plotted as a white X with scatter (marker='x', s=169, linewidths=3, color='w', zorder=10). The plot is titled 'K-means clustering on the digits dataset (PCA-reduced data)' with the second line 'Centroids are marked with white cross', and is displayed with show().
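
To make the scaling step concrete, here is a minimal sketch of loading and scaling the digits. It assumes the truncated scale(...) call is applied to digits.data, and the names y, k, n_samples and n_features are chosen here for illustration. It also checks what scale does to the values: each feature ends up centered on zero, but individual values can still be larger than 1.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.preprocessing import scale

digits = load_digits()

# Assumption: the scale(...) call in the tutorial is applied to digits.data.
data = scale(digits.data)

# True digit labels; K Means never sees them, they are only used for scoring.
y = digits.target

# Number of clusters = number of distinct digits in the data set.
k = len(np.unique(y))

# Number of samples and features, taken from the shape of the data.
n_samples, n_features = data.shape

print("clusters:", k, "samples:", n_samples, "features:", n_features)
print("largest per-feature mean after scaling:", abs(data.mean(axis=0)).max())
print("largest value after scaling:", data.max())
```

The last two lines show that the feature means are (numerically) zero while the largest scaled value is well above 1, so the result is standardized rather than clipped to a fixed range.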
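
The scoring and training steps can be sketched as follows. This is not the exact function from the sklearn website: it is a simplified version that assumes the scores are the ones named in the header row (inertia, homo, compl, v-meas, ARI, AMI, silhouette). The extra labels argument, the returned dictionary and random_state=0 are additions made here so the example is self-contained and repeatable.

```python
import numpy as np
from sklearn import metrics
from sklearn.cluster import KMeans
from sklearn.datasets import load_digits
from sklearn.preprocessing import scale

digits = load_digits()
data = scale(digits.data)   # assumption: scale is applied to digits.data
y = digits.target           # true labels, used only for scoring
k = len(np.unique(y))       # number of clusters


def bench_k_means(estimator, name, data, labels):
    """Fit the estimator on data, then print and return its scores.

    Hypothetical variant: unlike the tutorial's three-argument function,
    the true labels are passed in explicitly instead of read from a global.
    """
    estimator.fit(data)
    pred = estimator.labels_
    scores = {
        "inertia": estimator.inertia_,
        "homo": metrics.homogeneity_score(labels, pred),
        "compl": metrics.completeness_score(labels, pred),
        "v-meas": metrics.v_measure_score(labels, pred),
        "ARI": metrics.adjusted_rand_score(labels, pred),
        "AMI": metrics.adjusted_mutual_info_score(labels, pred),
        "silhouette": metrics.silhouette_score(data, pred, metric="euclidean"),
    }
    print(name, "\t".join(f"{key}={value:.3f}" for key, value in scores.items()))
    return scores


# Same estimator settings as in the tutorial; random_state is an addition.
clf = KMeans(n_clusters=k, init="random", n_init=10, random_state=0)
scores = bench_k_means(clf, "1", data, y)
```

Inertia is the only score here that does not use the true labels besides the silhouette score, so those two are the ones available when no labels exist.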

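The visualization described under Full Code can be sketched like this. The surviving fragments are filled in along the lines of the well-known scikit-learn K-means digits example, so treat the completed expressions (column slicing of reduced_data, the meshgrid and predict calls, cluster_centers_) as assumptions rather than the author's exact listing. The wrapper function plot_kmeans_digits and its random_state argument are hypothetical and exist only to keep the sketch self-contained.

```python
import matplotlib.pyplot as plt
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.preprocessing import scale


def plot_kmeans_digits(h=0.02, random_state=42):
    """Cluster the PCA-reduced digits and draw the cluster regions."""
    digits = load_digits()
    data = scale(digits.data)
    n_digits = len(np.unique(digits.target))

    # Reduce to two components so the clusters can be drawn in a plane.
    reduced_data = PCA(n_components=2).fit_transform(data)
    kmeans = KMeans(init="k-means++", n_clusters=n_digits, n_init=10,
                    random_state=random_state)
    kmeans.fit(reduced_data)

    # h is the step size of the mesh; decrease it for a finer picture.
    x_min, x_max = reduced_data[:, 0].min() - 1, reduced_data[:, 0].max() + 1
    y_min, y_max = reduced_data[:, 1].min() - 1, reduced_data[:, 1].max() + 1
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h),
                         np.arange(y_min, y_max, h))

    # Obtain a cluster label for each point in the mesh.
    Z = kmeans.predict(np.c_[xx.ravel(), yy.ravel()]).reshape(xx.shape)

    # Put the result into a color plot.
    fig = plt.figure()
    plt.imshow(Z, interpolation="nearest",
               extent=(xx.min(), xx.max(), yy.min(), yy.max()),
               cmap=plt.cm.Paired, aspect="auto", origin="lower")
    plt.plot(reduced_data[:, 0], reduced_data[:, 1], "k.", markersize=2)

    # Plot the centroids as a white X.
    centroids = kmeans.cluster_centers_
    plt.scatter(centroids[:, 0], centroids[:, 1], marker="x", s=169,
                linewidths=3, color="w", zorder=10)
    plt.title("K-means clustering on the digits dataset (PCA-reduced data)\n"
              "Centroids are marked with white cross")
    return fig, Z, centroids


fig, Z, centroids = plot_kmeans_digits()
```

Calling plt.show() after the last line opens the figure in a window; fig.savefig with a file name of your choice writes it to disk instead.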